by Allister John Marran

|

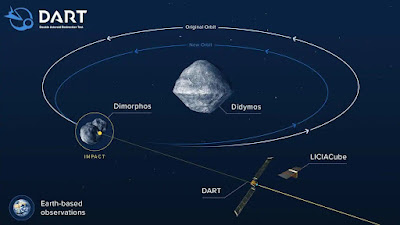

| NASA's Double Asteroid Redirection Test, 26 September 2022 |

We have striven to become significantly more fallible by sitting at the campfire exchanging stories, choosing to first believe and then explore a honeycomb of fictional realities.

Our little rock doesn't stand still. It moves at more than a hundred thousand kilometres an hour around the sun, while rotating constantly on its own axis, with gravity pulling eternally. And yet some very clever people place explosives in a cylinder and fire it upwards, breaking free of our planet and then moving at thirty times the speed of sound to another celestial body which is also orbiting the sun while rotating on its own axis.

They aim the rocket at an empty point in space, knowing that the other planet will arrive at the exact moment the rocket does.

We can do all of that, and do it safely and reliably, not because of faith or emotion, not because of belief or trust. The numbers tell us it will be there, every time, to the second.

Science and mathematics do not care about your feelings or your complex personal belief structures. They do not worry about offending people or massaging one's scruples. They simply and succinctly solve a physical or theoretical problem as efficiently as possible.

Mathematics is the universal language. Unchanging and uncompromised.

But emotion and belief and trust are the language of mankind. They are what makes us human, a most endearing quality that allows love and hate, care and neglect, laughter and crying, and great triumph and cruelty.

The great works of Shakespeare and Tolkien and King and Koontz could simply not be written in the language of maths. They require a suspension of disbelief and an emotional core.

Because human behaviour hardly ever adds up.

But our strength is our weakness, and it's the exploitation of these analogue traits which has led us to place a greater importance on our beliefs than on the facts.

More than ever, nefarious actors are taking the political, religious or social stage, and asking you to forget the truth, ignore the facts, trample the maths, destroy the science and just believe them.

Trust them implicitly. Don't over-think, don't look too deeply, don't add it up or use common sense to interrogate the facts. Just trust them.

And so we now live in an age where conspiracy theorists can mobilise an army, televangelists can ask their congregation for another eight hundred million to buy another jet, politicians can command more loyalty the more they lie and cheat and thieve, and Finding Bigfoot can enter a twelfth season without ever finding Bigfoot.

It's not necessary to destroy your humanity in order to defeat these exploitative forces trying to cajole you into believing nonsense. You don't have to stop your suspension of disbelief, or temper your emotion, or stop loving the ones you love.

You just need to compartmentalise or segment various types of knowledge and activity, and treat each one a little differently.

When you read Shakespeare or watch a romantic comedy or praise your God or watch your football team, let it all out, go to town, laugh and weep and give it your best.

But don't ever give a person the keys to your soul or your belief structure. Don't allow a politician to get you worked up. Don't let your guard down when you need to keep your wits.

Know when to use the language of people or the language of maths and science. Become fully bilingual and know when to change between the two.